Efficient Computing for AI at the Edge

Running advanced AI on resource-constrained platforms.

The Focus

Most AI applications assume access to data centers with effectively unlimited power and cooling. That assumption fails in our operating environments, where communications are denied and bandwidth is limited. Calibrated autonomy still demands real-time inference and optimization, so both must run within the platform's hardware constraints. Autonomous vehicles operate on limited power budgets and are subject to strict thermal, weight, and volume constraints. If compute overhead compromises range, payload, or survivability, the capability doesn't deploy.

We're co-designing hardware and software to enable autonomous systems to run sophisticated AI algorithms at the edge—whether on a hand-launched Group 1 UAS or a collaborative combat aircraft operating in contested airspace.

Core Objectives

Designing algorithms and silicon as one system

Treating compute as an integrated architecture, not an afterthought

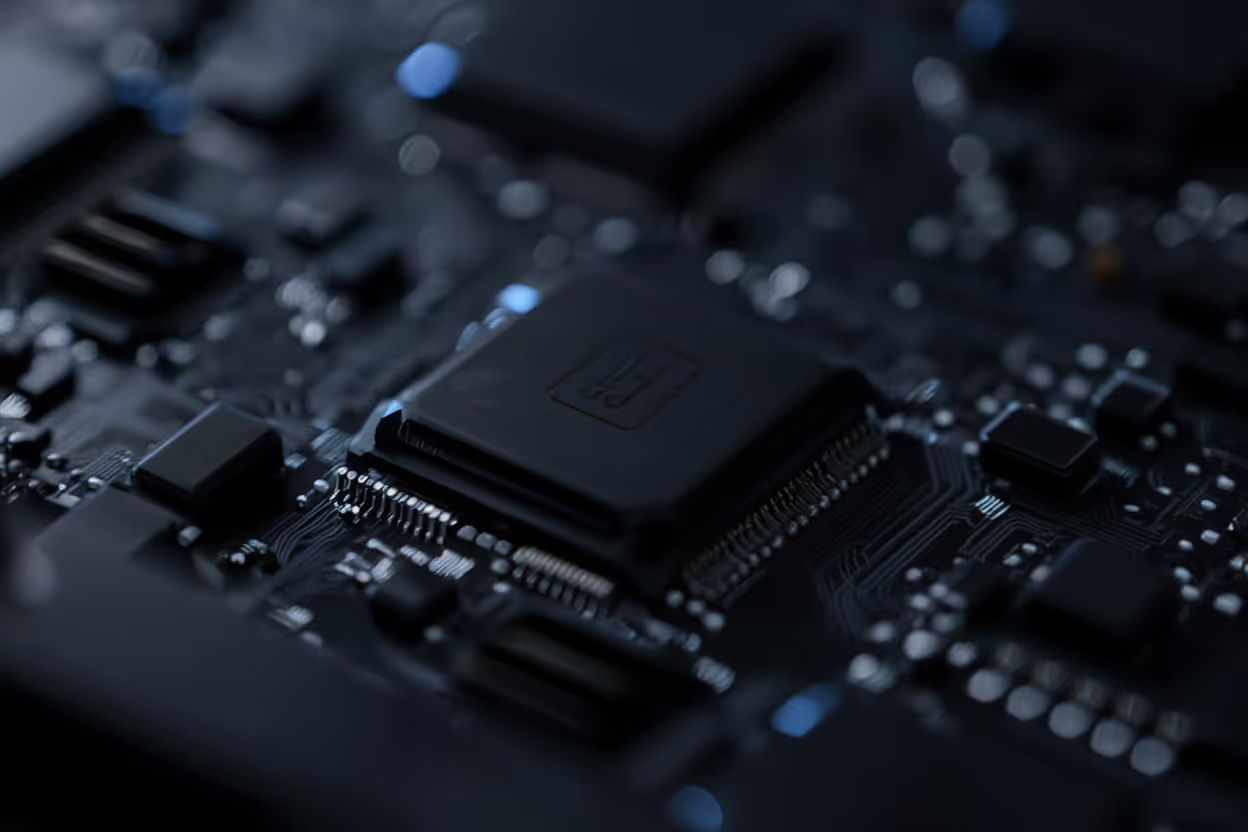

Most AI pipelines are written first and forced onto hardware later. Edge autonomy cannot afford that separation. Hardware–software co-design begins with mission constraints — power draw, latency, thermal envelope, and weight — and builds algorithms that are native to the platform rather than adapted to it. Neural architectures, memory layouts, and instruction pipelines evolve together so that every watt of power produces operational capability instead of overhead.

The technical challenge is coordinating across traditionally siloed domains. AI researchers, embedded systems engineers, and hardware architects must optimize toward a shared objective function: maximum autonomy per joule. Co-design workflows rely on simulation-driven profiling, hardware-aware model training, and tight feedback loops between firmware and algorithm development. The result is not just faster inference, but systems engineered from the ground up to operate within unforgiving edge constraints.
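One way to make "autonomy per joule" concrete is to compare candidate model and hardware pairings by energy per inference, subject to accuracy and latency floors. The sketch below is illustrative only: the `Candidate` type, the candidate names, and every number in it are hypothetical, not measurements from any real platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical model/hardware pairing with illustrative measurements."""
    name: str
    avg_power_w: float  # average power draw during inference, in watts
    latency_s: float    # time per inference, in seconds
    accuracy: float     # task accuracy in [0, 1]


def joules_per_inference(c: Candidate) -> float:
    """Energy per inference: power (W) multiplied by time (s) gives joules."""
    return c.avg_power_w * c.latency_s


def best_within_budget(candidates, min_accuracy, max_latency_s):
    """Return the lowest-energy candidate that meets both floors, or None."""
    feasible = [
        c for c in candidates
        if c.accuracy >= min_accuracy and c.latency_s <= max_latency_s
    ]
    if not feasible:
        return None
    return min(feasible, key=joules_per_inference)


# Hypothetical numbers chosen only to illustrate the trade-off.
candidates = [
    Candidate("baseline_gpu", avg_power_w=30.0, latency_s=0.010, accuracy=0.92),
    Candidate("co_designed", avg_power_w=5.0, latency_s=0.020, accuracy=0.91),
    Candidate("tiny_model", avg_power_w=2.0, latency_s=0.015, accuracy=0.80),
]
choice = best_within_budget(candidates, min_accuracy=0.90, max_latency_s=0.025)
print(choice.name, joules_per_inference(choice))
```

In this toy comparison the fastest option is not the one selected. The lowest-energy option is also rejected because it misses the accuracy floor, which is the kind of trade-off a shared objective is meant to expose.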

Breaking free from conventional architectures

Exploring hardware that bends the scaling curve.

Traditional CPUs and GPUs were not designed for edge autonomy. Emerging compute paradigms — neuromorphic chips, photonic accelerators, and analog inference hardware — promise orders-of-magnitude improvements in energy efficiency by matching hardware physics to AI workloads. Neuromorphic systems mimic biological neural dynamics for ultra-low-power event processing, while photonic compute leverages light-speed parallelism to accelerate matrix operations with minimal heat.

The challenge is integrating these experimental architectures into operational systems. Toolchains, programming models, and verification frameworks must mature alongside the hardware. Edge autonomy demands reliability, determinism, and predictable failure modes — qualities that cannot be sacrificed for performance. Success requires bridging cutting-edge research with field-ready engineering so that novel compute moves from laboratory curiosity to deployable capability.

Shrinking models without shrinking performance

Precision engineering for constrained deployment

Large AI models contain redundancy by design. Compression techniques exploit that redundancy to reduce footprint while preserving functional behavior. Pruning, quantization, knowledge distillation, and weight sharing transform heavyweight models into edge-ready variants that maintain mission-critical accuracy. The objective is not minimal size but optimal efficiency for the task.
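As a rough illustration of one of these techniques, the sketch below applies symmetric per-tensor int8 quantization to a handful of weights and measures the round-trip error. It is a minimal example under simplifying assumptions (one scale for the whole tensor, no calibration data, made-up weights), not a description of any particular production pipeline.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map the stored integers back to approximate float values."""
    return [v * scale for v in q]


# Illustrative weights, not taken from a real model.
weights = [0.50, -1.27, 0.003, 0.90, -0.25]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale step. Whether that error is acceptable depends on the task, which is why the objective is efficiency for the task rather than minimal size.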

Optimization must occur across the entire inference stack. Compiler-level transformations, memory scheduling, and hardware-specific acceleration ensure that compressed models translate into real-world gains. Compression techniques applied in isolation, without accounting for hardware constraints and autonomy stack requirements, fail to deliver real-time performance on SWaP-C-constrained platforms. Effective pipelines integrate distillation, pruning, and quantization with hardware-in-the-loop validation, ensuring compressed models remain performant as mission requirements and hardware targets evolve.

Applications

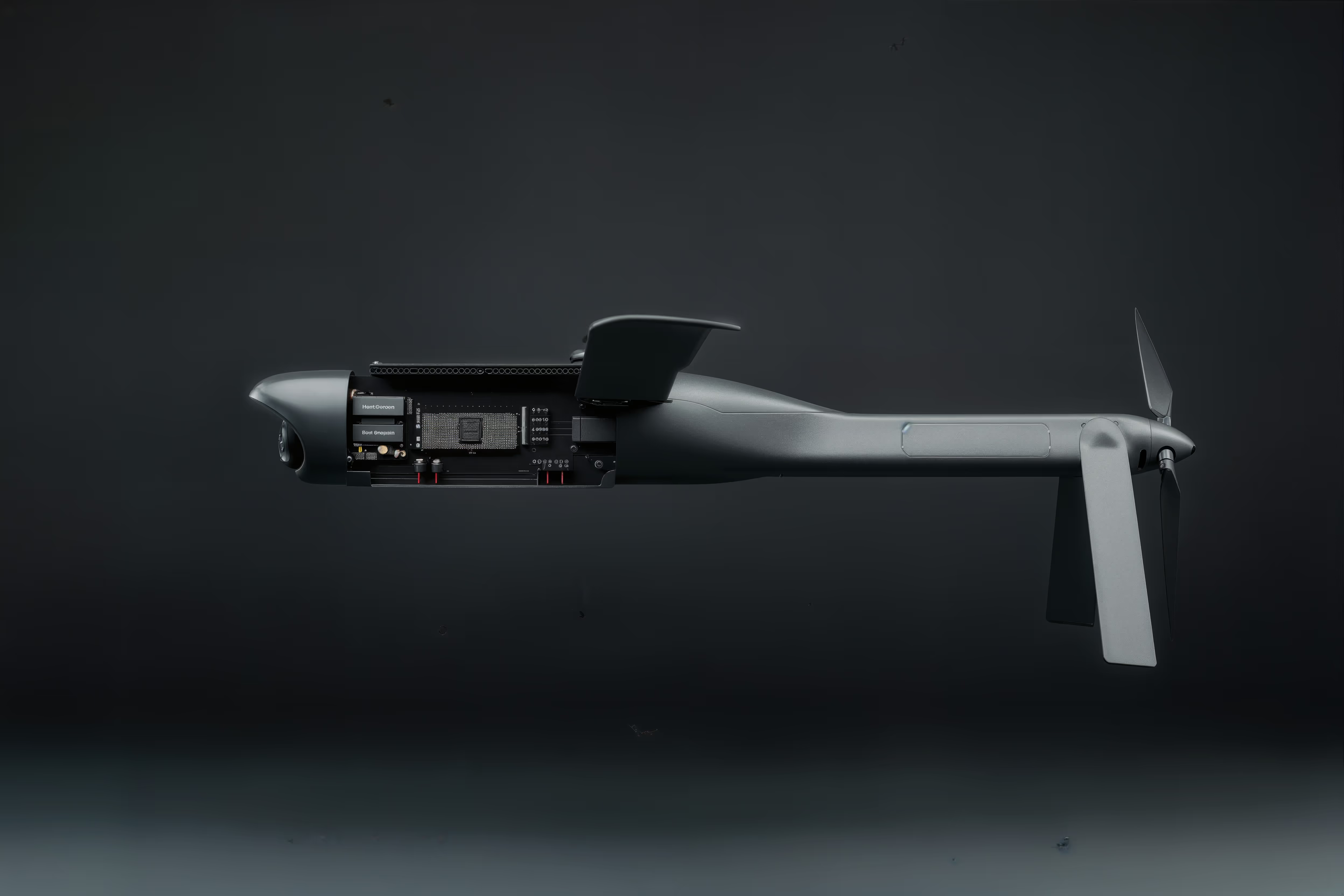

Group 1 UAS

A hand-launched drone with a limited power budget must run visual navigation and threat detection algorithms without draining its battery in minutes. Efficient compute enables extended mission duration without compromising autonomous capability.

Collaborative Combat Aircraft (CCA)

A loyal wingman must allocate compute among mission autonomy, sensor fusion, and electronic warfare, all within thermal and power constraints that don't compromise range or payload. Hardware-software co-design ensures optimal resource utilization.

Long-Endurance ISR

A platform operating for 12+ hours in a contested environment needs energy-efficient compute that doesn't require continuous cooling or external power sources. Compute efficiency directly translates to mission persistence.

What We're Looking For

The AI Studio doesn’t issue RFPs. Instead, we rely on researchers and technologists working in this space to contact us when they see potential alignment. If you're working on something in or adjacent to these areas, reach out today!

Energy-efficient AI architectures for edge deployment

Hardware-software co-design for resource-constrained systems

Novel compute architectures (neuromorphic, photonic, analog)

Real-time inference on constrained hardware

Thermal management for edge AI systems

Frequently Asked Questions

Why can't we just use cloud-based AI for these systems?

In a near-peer conflict, connectivity is likely the first thing to go. Relying on a cloud link creates a single point of failure. For a system to be truly autonomous, it must have onboard processing power to analyze complex data and make decisions locally.

What is “hardware-software co-design”?

Traditional AI development often ignores the hardware it runs on until the very end. We do the opposite. We design the compute architecture and the software algorithms simultaneously. By optimizing the math to fit the specific silicon, we can run computationally expensive algorithms (like mini LLMs) on hardware that would otherwise be too slow or power-hungry.

How do you handle SWaP-C (Size, Weight, Power, and Cost) constraints?

Every watt spent on compute is a watt unavailable for propulsion or payload, which cuts directly into flight time. Our research focuses on energy-efficient inference so that onboard AI can run demanding models without draining the battery. We prioritize architectures that deliver maximum “intelligence per watt.”
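A back-of-the-envelope calculation shows how the compute budget trades against endurance. The sketch below assumes a constant total power draw and ignores battery discharge behavior, added mass, and thermal effects. The battery capacity and wattages are hypothetical values chosen for easy arithmetic.

```python
def endurance_minutes(battery_wh, propulsion_w, compute_w):
    """Flight time in minutes, assuming a constant total power draw."""
    total_w = propulsion_w + compute_w
    if total_w <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total_w * 60.0


# Hypothetical small-UAS figures, for illustration only.
BATTERY_WH = 50.0
PROPULSION_W = 90.0

heavy = endurance_minutes(BATTERY_WH, PROPULSION_W, compute_w=30.0)
light = endurance_minutes(BATTERY_WH, PROPULSION_W, compute_w=10.0)
gained = light - heavy
print(f"{heavy:.1f} min vs {light:.1f} min, gain {gained:.1f} min")
```

Under these assumptions, cutting the compute draw from 30 W to 10 W extends flight time from about 25 minutes to about 30 minutes, a gain of roughly 20 percent with no change to the airframe or battery.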

Can you really run a Large Language Model (LLM) on a drone?

We are developing miniaturized, specialized LLMs and vision models for embedded computers. These are task-specific models that enable a vehicle to understand and interact with its environment in ways previously possible only on massive server racks.

Operators

DAF-Stanford AI Studio exists to solve real operational problems alongside the people facing them. If you are encountering capability gaps at the edge and need autonomy that works under pressure, we are ready to partner with you. Our focus is to turn urgent field needs into deployable systems that directly strengthen the mission.

Academia

DAF-Stanford AI Studio exists to translate frontier research into real operational impact. If you are advancing new methods in autonomy, AI, or simulation and want to see that work tested in high-stakes environments, we are ready to collaborate. Our goal is to move promising ideas from the lab into systems that matter.

Industry

DAF-Stanford AI Studio exists to build deployable autonomy with people who solve hard technical problems. If you are developing AI systems that must perform under real-world constraints — power limits, contested environments, imperfect data — we are ready to work alongside you and turn that capability into operational systems.